Alloy Steel Microstructure

Deep learning segmentation of alloy steel microstructures from SEM images under extreme data and texture ambiguity.

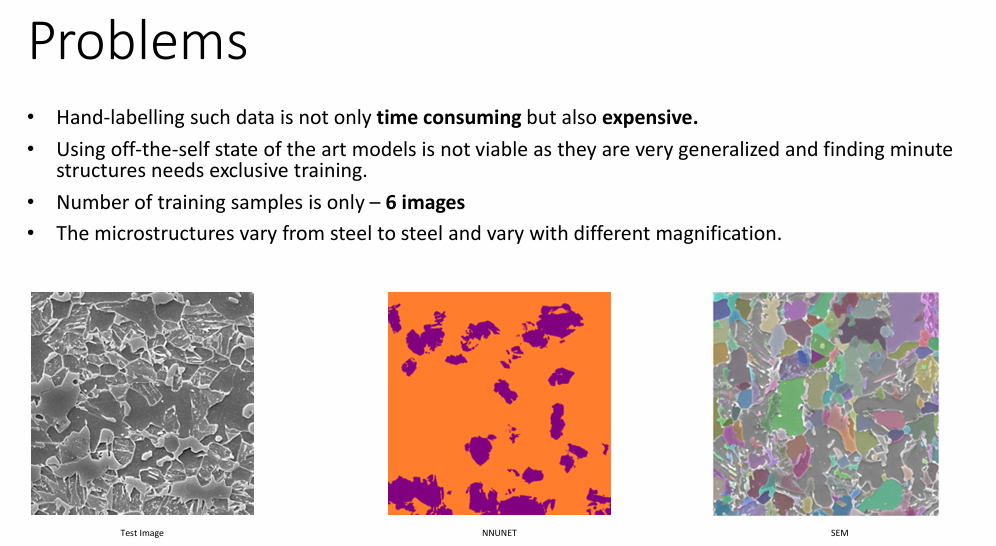

Problem

Quantifying alloy steel composition from scanning electron microscopy (SEM) images requires accurate segmentation of texture-dominated microstructures such as ferrite, bainite, and martensite.

Unlike object-centric vision tasks, these phases exhibit ambiguous local textures, weak boundaries, and strong global dependencies on material composition.

Key domain constraints:

- Pixel-level annotation is slow, expensive, and expert-driven

- Public datasets are extremely small (often < 50 images)

- Microstructures vary drastically across steel grades and magnification levels

- Local texture alone is insufficient to resolve phase ambiguity

Phase I — MDPI: Learning to Segment Under Extreme Data Scarcity

Goal: Establish a reliable deep learning baseline for multi-phase steel microstructure segmentation under limited data.

In our first study (MDPI Materials), we focused on answering a pragmatic question:

Can modern CNN-based segmentation models learn meaningful microstructural boundaries with very few SEM images?

Approach

- Dataset: Private SEM alloy steel dataset + MetalDAM benchmark

- Models: U-Net family, attention-based variants

- Strategy:

  - Sliding-window training to compensate for small sample size

  - Strong geometric and photometric augmentation

  - Dice + Cross-Entropy loss to stabilize training

- Evaluation: Dice score and boundary accuracy across magnifications
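The sliding-window and Dice + Cross-Entropy items above can be illustrated with a minimal NumPy sketch. It is not the training code from the study: the function names, the patch size of 64, the stride of 32, and the equal weighting of the two loss terms are assumptions chosen for illustration, and the data is synthetic.

```python
import numpy as np

def sliding_window_patches(image, mask, patch=64, stride=32):
    """Yield aligned (image_patch, mask_patch) crops with overlap.

    Assumed sizes: 64-pixel patches, 32-pixel stride. Rows/columns that do
    not fit a full patch at the right/bottom edge are skipped in this sketch.
    """
    h, w = image.shape[:2]
    for top in range(0, h - patch + 1, stride):
        for left in range(0, w - patch + 1, stride):
            yield (image[top:top + patch, left:left + patch],
                   mask[top:top + patch, left:left + patch])

def dice_ce_loss(probs, target, num_classes, eps=1e-6):
    """Cross-entropy plus soft Dice loss, equally weighted (an assumption).

    probs:  (H, W, C) per-pixel class probabilities (e.g. softmax outputs)
    target: (H, W) integer class labels
    """
    onehot = np.eye(num_classes)[target]                      # (H, W, C)
    ce = -np.mean(np.sum(onehot * np.log(probs + eps), axis=-1))
    inter = np.sum(probs * onehot, axis=(0, 1))               # per-class overlap
    denom = np.sum(probs, axis=(0, 1)) + np.sum(onehot, axis=(0, 1))
    dice = (2.0 * inter + eps) / (denom + eps)                # per-class soft Dice
    return ce + (1.0 - dice.mean())

# Toy usage on synthetic data (not real SEM images).
rng = np.random.default_rng(0)
image = rng.random((128, 128))
mask = rng.integers(0, 3, size=(128, 128))
patches = list(sliding_window_patches(image, mask))

perfect = np.eye(3)[mask]                   # "prediction" identical to the labels
uniform = np.full((128, 128, 3), 1.0 / 3)   # uninformative prediction
loss_perfect = dice_ce_loss(perfect, mask, num_classes=3)
loss_uniform = dice_ce_loss(uniform, mask, num_classes=3)
```

Overlapping crops multiply the number of training samples drawn from each annotated image, which is the point of the sliding-window strategy when only a few dozen images exist. The Dice term rewards overlap per class regardless of class size, while cross-entropy supplies a per-pixel signal.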

Outcome

- Demonstrated that CNNs can segment alloy microstructures beyond what manual heuristics achieve

- Identified systematic failure modes:

  - Texture confusion between visually similar phases

  - Predictions drifting from physically valid phase proportions

- Crucially, segmentation quality alone was insufficient for downstream material analysis

This revealed a deeper limitation:

Pixel-accurate segmentation does not guarantee compositionally correct results.
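A small synthetic example makes this gap concrete. The sketch below is illustrative only: `phase_ratios` and `ratio_error` are hypothetical helper names, and the 10 x 10 masks are made up rather than taken from the dataset. It shows a prediction with 90% pixel accuracy that nonetheless erases a minor phase entirely.

```python
import numpy as np

def phase_ratios(mask, num_classes):
    """Fraction of pixels assigned to each phase in an integer label mask."""
    counts = np.bincount(mask.ravel(), minlength=num_classes)
    return counts / counts.sum()

def ratio_error(pred, truth, num_classes):
    """L1 distance between predicted and reference phase fractions."""
    return np.abs(phase_ratios(pred, num_classes)
                  - phase_ratios(truth, num_classes)).sum()

# Synthetic reference: 90 pixels of phase 0 and 10 pixels of phase 1.
truth = np.zeros((10, 10), dtype=int)
truth[0, :] = 1

# Synthetic prediction that misses the minor phase completely.
pred = np.zeros((10, 10), dtype=int)

pixel_accuracy = float(np.mean(pred == truth))    # 90 of 100 pixels agree
composition_gap = float(ratio_error(pred, truth, num_classes=2))
```

Here the pixel-level score looks acceptable, yet the estimated fraction of phase 1 drops from 0.10 to 0.00, which is exactly the kind of error that matters for downstream material analysis.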

Phase II — WACV: From Segmentation to Composition-Aware Modeling

Key Insight:

In metallography, experts implicitly reason in terms of global phase ratios, not just local textures.

This motivated a shift in perspective:

Segmentation should be globally consistent with expected material composition.

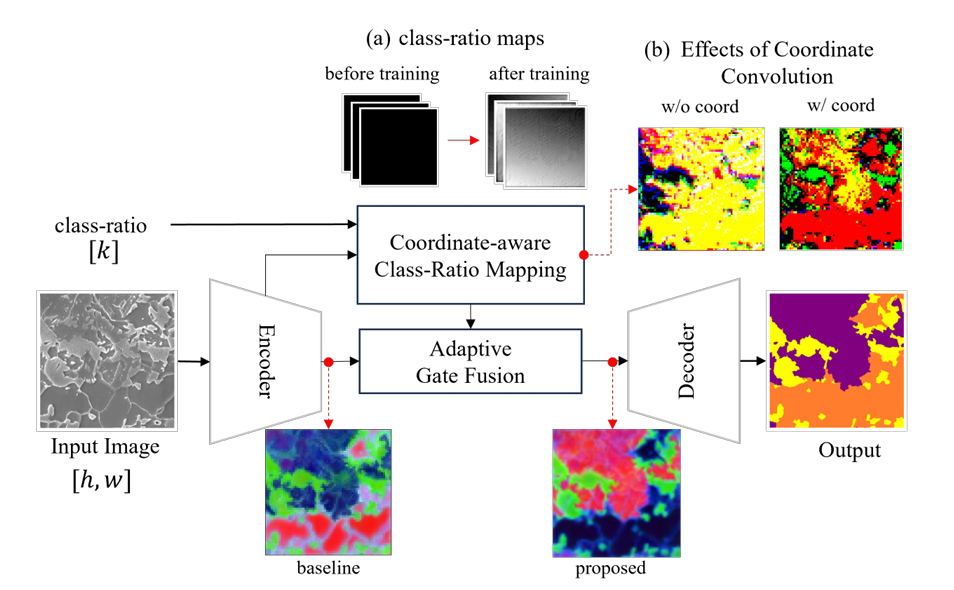

Core Idea

We introduced guided texture segmentation using class-ratio priors, formalized as:

- Coordinate-aware Class-Ratio Mapping (CCRM): converts global phase proportions into spatially aligned feature maps

- Adaptive Gate Fusion (AGF): dynamically balances local image evidence with global ratio guidance

Rather than treating ratios as post-hoc statistics, the model is conditioned on composition during inference.
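To give a rough feel for the two components, here is a deliberately simplified NumPy sketch. It is not the published CCRM or AGF formulation: the function names, the use of normalized (y, x) coordinate channels, the linear projection `w_proj`, and the sigmoid gate are all assumptions made for illustration, and the weights are random rather than learned.

```python
import numpy as np

def ratio_to_maps(ratios, height, width):
    """Broadcast a (C,) ratio vector to (H, W, C) and append normalized
    (y, x) coordinate channels, giving an (H, W, C + 2) guidance tensor."""
    ys, xs = np.meshgrid(np.linspace(0.0, 1.0, height),
                         np.linspace(0.0, 1.0, width), indexing="ij")
    ratio_maps = np.broadcast_to(ratios, (height, width, len(ratios)))
    return np.concatenate([ratio_maps, ys[..., None], xs[..., None]], axis=-1)

def gated_fusion(local_feat, guide_feat, w_gate, b_gate):
    """Per-pixel, per-channel sigmoid gate g in (0, 1):
    output = g * local_feat + (1 - g) * guide_feat."""
    z = np.concatenate([local_feat, guide_feat], axis=-1) @ w_gate + b_gate
    gate = 1.0 / (1.0 + np.exp(-z))
    return gate * local_feat + (1.0 - gate) * guide_feat

# Toy usage with random weights (hypothetical shapes, nothing is trained).
rng = np.random.default_rng(0)
H, W, D = 8, 8, 16
ratios = np.array([0.6, 0.3, 0.1])            # assumed global phase fractions
local = rng.normal(size=(H, W, D))            # stand-in for decoder features
maps = ratio_to_maps(ratios, H, W)            # (8, 8, 5)
w_proj = rng.normal(size=(maps.shape[-1], D)) # project guidance to D channels
guide = maps @ w_proj
w_gate = rng.normal(size=(2 * D, D))
fused = gated_fusion(local, guide, w_gate, np.zeros(D))
```

The gate lets each location decide how much to trust local texture evidence versus the global ratio signal, which differs from plain concatenation where the network receives both inputs with no explicit arbitration.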

Key Contributions (WACV)

- Injects image-specific global knowledge into encoder–decoder models

- Resolves texture ambiguity by aligning local predictions with global constraints

- Works across CNNs, Transformers, and SAM-based models

- Adds fewer than 2% additional parameters while delivering consistent Dice improvements

- Robust even when ratio estimates are noisy (≥ 60% accuracy)

- Introduces auto-regressive inference, removing reliance on expert input
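One plausible reading of the auto-regressive inference item is a loop in which the model's own prediction supplies the ratio for the next pass. The sketch below follows that reading and should be treated as an assumption rather than the published procedure: `autoregressive_segment`, the uniform starting ratio, the fixed number of steps, and the toy `toy_segment` stand-in for a ratio-conditioned model are all hypothetical.

```python
import numpy as np

def autoregressive_segment(segment_fn, image, num_classes, steps=3):
    """Start from a uniform ratio, then repeatedly re-estimate the ratio
    from the model's own prediction and feed it back in.

    segment_fn(image, ratios) -> (H, W) integer mask is a hypothetical
    ratio-conditioned segmentation model.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    ratios = np.full(num_classes, 1.0 / num_classes)
    for _ in range(steps):
        mask = segment_fn(image, ratios)
        counts = np.bincount(mask.ravel(), minlength=num_classes)
        ratios = counts / counts.sum()
    return mask, ratios

def toy_segment(scores, ratios):
    """Toy stand-in for a real model: per-pixel class scores biased by the
    log of the current ratio estimate, followed by argmax."""
    return np.argmax(scores + np.log(ratios + 1e-6), axis=-1)

# Toy usage: random per-class scores play the role of the input image.
rng = np.random.default_rng(0)
scores = rng.normal(size=(16, 16, 3))
final_mask, final_ratios = autoregressive_segment(toy_segment, scores, num_classes=3)
```

No expert-provided ratio appears anywhere in the loop, which is the property the contribution describes. Whether such a loop converges, and how many steps are needed, depends on the actual model and is not something this toy example can show.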

Impact

- +6.1% average Dice improvement across architectures

- Clear separation of microstructural phases in embedding space

- Bridges the gap between computer vision segmentation and materials science requirements

Notes & Lessons Learned

- Texture segmentation fails when treated as a purely local problem

- Domain knowledge must be injected structurally, not via naïve concatenation

- Global composition acts as a powerful regularizer under data scarcity

- This project shaped my broader research direction toward:

  - Guided learning

  - Global–local consistency

  - Memory and feedback mechanisms in vision systems

This line of work directly influenced my later research on memory-augmented and feedback-driven architectures for segmentation under uncertainty.